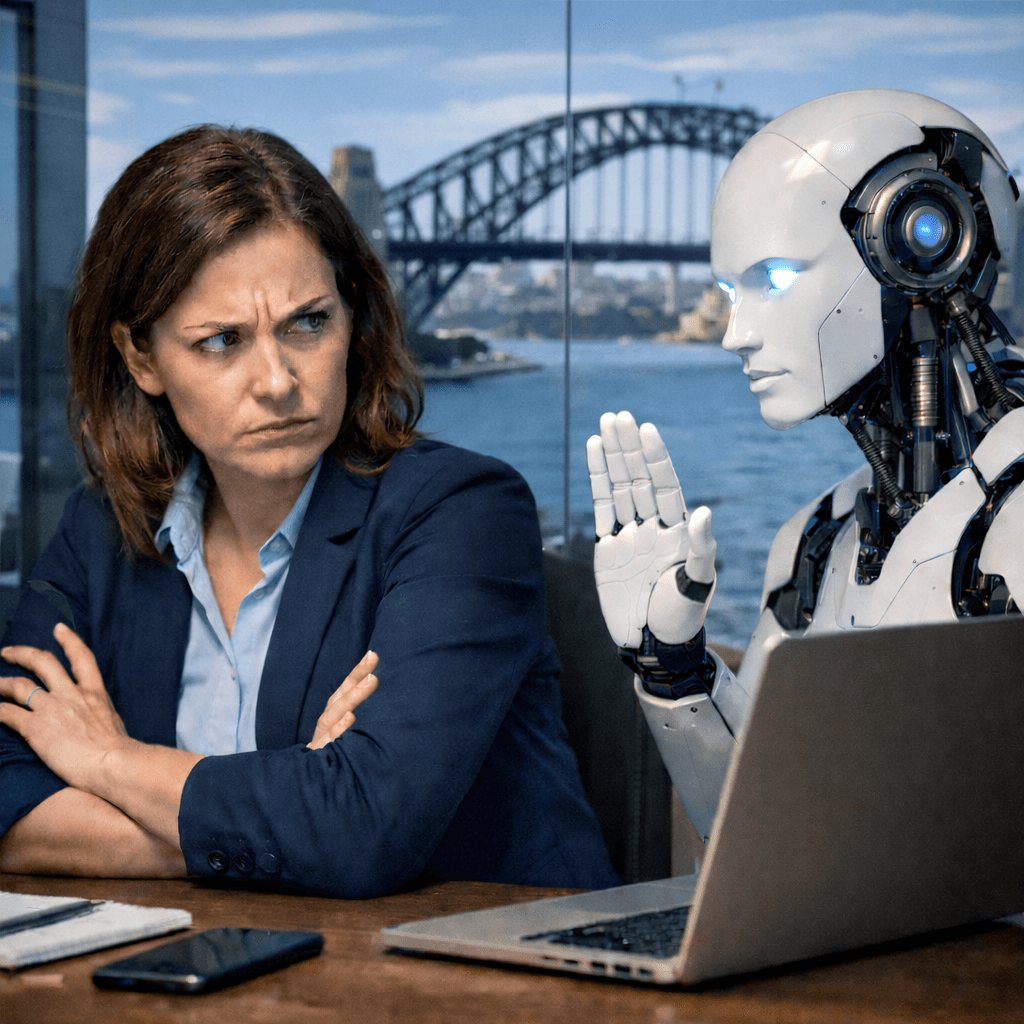

Why You Shouldn't Just Trust AI Blindly

As the saying goes, "trust comes with time," and in time, you might be able to trust AI. In its current form, though, there is a lot not to trust. Let me explain. Technology is fallible, and AI is not immune to this. The term "AI hallucination" was coined to describe one symptom of this: AI confidently giving you an answer that is not true. But hallucination is only part of the story. Whether you are relying on AI's answers, handing AI sensitive data, or letting AI agents connect to your business-sensitive vendors and perform actions on your behalf, there is a lot of "trusting" going on.

Trusting the AI Provider

With so many providers offering AI services, you are "trusting" that the provider is secure and protects the data you send it, whether that is a simple prompt or, at the extreme, sensitive financial records. You are trusting that AI providers store that data safely and securely. Some of these providers are huge, which makes them large targets for adversaries looking to harvest very sensitive information and, potentially, to use it to attack the companies it belongs to. Compliance certifications from the large providers only get you so far. Many of them are tick-box exercises run by external auditors who are often new to the audit and cyber field, have limited technical knowledge and no business context, and are working through dozens of companies per year. Adversaries are also turning the AI providers' own tools against them, running automated scans across every public-facing endpoint to find the smallest weakness they can exploit and use as a foothold to pivot deeper into the provider's network. Once in, they will hunt for any sensitive data held and can use the provider's own AI to sift through hundreds of petabytes of it.

Trusting the AI Agents

AI agents are so effective I can hardly believe it. They can take hours of work and distil it down to five minutes at "expert level," so I can see why the hype around them is so strong right now. Unfortunately, this comes with even more risk. Depending on the setup, the agent may pass credentials from your MCP config through the AI provider's LLM (which may then store them) to connect to a system such as GitHub. So the first risk is credentials held at the AI provider. The main mitigation is to lock the credentials to a particular source location or IP address, but only if the provider offers that functionality. The second risk is that the AI agent potentially has full rights to the system you connect it to, and with the wrong or misinterpreted prompt, it might do something drastic and unintended in that system, with unrecoverable or business-impacting downstream consequences, as seen here and here and here, and no doubt in plenty more cases that go unreported.
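The IP-restriction mitigation can be sketched in a few lines. This is a minimal illustration, assuming the service that validates the credential can see the caller's source IP; the allowlist ranges (RFC 5737 documentation addresses) and the `credential_allowed_from` function are hypothetical, not any provider's real API.

```python
import ipaddress

# Hypothetical allowlist: the networks a credential may be used from.
# These are RFC 5737 documentation ranges, not real infrastructure.
ALLOWED_SOURCES = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.7/32"),
]


def credential_allowed_from(source_ip: str, allowed=ALLOWED_SOURCES) -> bool:
    """Return True only if the request's source IP falls inside an allowed network."""
    try:
        addr = ipaddress.ip_address(source_ip)
    except ValueError:
        # Fail closed: an address we cannot parse is treated as untrusted.
        return False
    # An IPv6 address never matches an IPv4 network (membership returns False).
    return any(addr in network for network in allowed)


if __name__ == "__main__":
    print(credential_allowed_from("203.0.113.42"))  # → True
    print(credential_allowed_from("192.0.2.10"))    # → False
    print(credential_allowed_from("not-an-ip"))     # → False
```

Failing closed on malformed input is the safer default here: a leaked credential replayed from anywhere outside the allowlist is simply refused.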

Where NeverTrust.ai Can Help

At NeverTrust, we built our platform around a fundamental principle: AI cannot be trusted to self-govern. That is not a criticism of the technology; it is an honest assessment of how current systems work. Our platform gives organisations the tools to:

- Stop data before it is sent to the AI provider by passing it through our machine learning models and policies, blocking the data flow before it is too late. This helps protect against the first scenario, trusting the AI provider.

- Intercept AI agent actions before they cause harm, stopping agents from doing something catastrophic that leads to business outages or worse.
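The two controls in the list above can be illustrated with a deliberately simple sketch of a policy gate. This is not NeverTrust's implementation: the regex patterns, action names, `Decision` type and `check_prompt`/`check_action` functions are all hypothetical, and plain pattern matching stands in for the machine learning the platform describes. Prompts are checked before they leave, and high-risk agent actions are held until a human approves them.

```python
import re
from dataclasses import dataclass

# Hypothetical policy patterns; real detection would need far more than regexes.
SENSITIVE_PATTERNS = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}

# Hypothetical agent actions that always need explicit human approval.
HIGH_RISK_ACTIONS = {"delete_repository", "force_push", "drop_table"}


@dataclass
class Decision:
    allowed: bool
    reason: str


def check_prompt(prompt: str) -> Decision:
    """Block a prompt before it is sent to the AI provider if it matches a sensitive pattern."""
    for name, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(prompt):
            return Decision(False, f"blocked: matched {name}")
    return Decision(True, "ok")


def check_action(action: str, approved_by_human: bool = False) -> Decision:
    """Hold a high-risk agent action until a human explicitly approves it."""
    if action in HIGH_RISK_ACTIONS and not approved_by_human:
        return Decision(False, f"held: {action} requires human approval")
    return Decision(True, "ok")


if __name__ == "__main__":
    print(check_prompt("Use key AKIAIOSFODNN7EXAMPLE please"))
    # → Decision(allowed=False, reason='blocked: matched aws_access_key')
    print(check_action("delete_repository"))
    # → Decision(allowed=False, reason='held: delete_repository requires human approval')
```

Regexes keep the sketch short, but they will miss many kinds of sensitive data and flag some harmless text, which is why combining policies with trained models, as described above, matters in practice.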